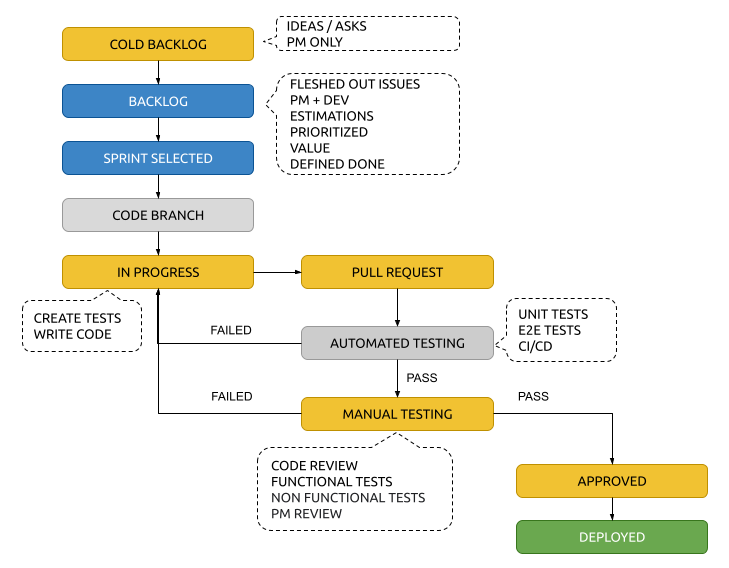

When developing software in an agile environment, how your tickets (or issues) flow is critical to understanding how the team is doing. In my experience, the workflow above is how I manage it. This builds on the roles and concepts presented in my post Effective Software Development with Agile SCRUM.

Cold Backlog

The Cold Backlog is emergent. As the product backlog evolves, it’s easy to add new stories and items, or remove them, as new information arises. Nothing here is permanent, and nothing here is ready to start development.

Backlog

The Backlog is a collection of stories that have been refined to the standard agreed in the Backlog Grooming session.

Items in the backlog meet the following criteria:

- Well-formed User Stories (As a PERSONA, I want to SOMETHING so that RESULT)

- An effort estimate, produced collaboratively by the engineering team members and the Product Owner

- A Definition of Ready, capturing the shared understanding of the steps a team needs to take to ensure a requirement is well-defined and can be pulled into their next sprint.

- A Definition of Done, capturing the team’s shared understanding of what “done” means to them.

- Optional secondary information, such as Priority, Urgency, Severity or Customer Value, each defined by the Product Owner
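To make these entry criteria concrete, here is a minimal Python sketch of a check a team might run before promoting an item out of the Cold Backlog. The `BacklogItem` fields, the `missing_for_backlog` helper and the `PROJ-1` key are hypothetical. The Definition of Ready and Definition of Done are team agreements that no script can judge, so they are modelled here as a simple flag and a list of criteria.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Matches the "As a PERSONA, I want to SOMETHING so that RESULT" template.
USER_STORY = re.compile(
    r"^As an? (?P<persona>.+?), I want to (?P<goal>.+?) so that (?P<result>.+)$"
)


@dataclass
class BacklogItem:
    key: str                              # hypothetical issue key, e.g. "PROJ-1"
    story: str                            # the user story text
    estimate: Optional[int] = None        # effort agreed with the Product Owner
    ready: bool = False                   # team agrees the Definition of Ready is met
    done_criteria: Tuple[str, ...] = ()   # the Definition of Done, one entry per criterion


def missing_for_backlog(item: BacklogItem) -> List[str]:
    """List what still keeps an item in the Cold Backlog (empty list means ready)."""
    problems = []
    if not USER_STORY.match(item.story):
        problems.append("story does not follow the user story template")
    if item.estimate is None:
        problems.append("no effort estimate")
    if not item.ready:
        problems.append("Definition of Ready not met")
    if not item.done_criteria:
        problems.append("no Definition of Done")
    return problems


item = BacklogItem(
    "PROJ-1",
    "As a shopper, I want to save my basket so that I can check out later",
    estimate=3,
    ready=True,
    done_criteria=("basket survives logout",),
)
print(missing_for_backlog(item))  # → []
```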

Sprint Selected

Items in the Sprint Selected category have estimated effort that fits within the velocity the team can deliver in the time-box of its Sprint. The number of items selected should follow directly from the length of the Sprint, and it should not be dictated to the engineering team.
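Selection against velocity can be sketched as a greedy pass over the backlog in the Product Owner’s priority order. This is an illustration only, assuming estimates are story points and that `velocity` comes from the team’s own history rather than being imposed; the function name and issue keys are hypothetical.

```python
from typing import List, Tuple


def select_for_sprint(backlog: List[Tuple[str, int]], velocity: int) -> List[str]:
    """Pick items, in priority order, while their estimates fit the team's velocity.

    backlog: (issue key, estimate) pairs, already ordered by the Product Owner.
    velocity: points the team itself reports it can deliver in one Sprint.
    """
    selected: List[str] = []
    used = 0
    for key, points in backlog:
        if used + points <= velocity:
            selected.append(key)
            used += points
    return selected


backlog = [("PROJ-1", 5), ("PROJ-2", 8), ("PROJ-3", 3), ("PROJ-4", 2)]
print(select_for_sprint(backlog, velocity=10))  # → ['PROJ-1', 'PROJ-3', 'PROJ-4']
```

This version skips an item that does not fit and keeps looking at lower-priority items; a team that prefers strict priority order could stop at the first item that does not fit instead.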

Code Branch

Although it is not a status, once an engineer selects a ticket to work on, they should create a code branch named after the issue. This makes it easy to trace code back to issues, for example through the branch URL.

Example: feature-123 or bugfix-444
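A small sketch of the naming convention, assuming issue types map to the `feature` and `bugfix` prefixes shown above. The `PREFIXES` table and both helpers are hypothetical. The reverse lookup is what lets a branch be traced back to its issue.

```python
import re

# Hypothetical mapping from issue type to branch prefix; adjust to your tracker.
PREFIXES = {"story": "feature", "task": "feature", "bug": "bugfix"}

BRANCH_PATTERN = re.compile(r"^(feature|bugfix)-(\d+)$")


def branch_name(issue_type: str, issue_number: int) -> str:
    """Build a branch name such as 'feature-123' or 'bugfix-444'."""
    prefix = PREFIXES.get(issue_type.lower())
    if prefix is None:
        raise ValueError(f"no branch prefix defined for issue type {issue_type!r}")
    return f"{prefix}-{issue_number}"


def issue_number_from_branch(branch: str) -> int:
    """Recover the issue number from a branch name that follows the convention."""
    match = BRANCH_PATTERN.match(branch)
    if match is None:
        raise ValueError(f"branch {branch!r} does not follow the naming convention")
    return int(match.group(2))


print(branch_name("Bug", 444))                  # → bugfix-444
print(issue_number_from_branch("feature-123"))  # → 123
```

With the name in hand, the branch itself is created with `git switch -c feature-123` (or `git checkout -b feature-123` on Git versions older than 2.23).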

In Progress

In Progress tickets involve two main activities:

- Creating Tests: before coding, every issue should define its tests based on the Definition of Done. Initially, all of these tests will fail. Your future self cannot be trusted, and tests are one way to make sure your future self does the right thing.

- Writing Code
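A test-first sketch using Python’s built-in `unittest`, for a hypothetical ticket whose Definition of Done reads “orders of 100.00 or more ship free; smaller orders pay a flat 4.99”. The ticket, the amounts and `shipping_cost` are invented for illustration. The test class is written first and fails until `shipping_cost` exists and behaves as specified.

```python
import unittest

# Hypothetical Definition of Done for a hypothetical ticket:
#   orders of 100.00 or more ship free; smaller orders pay a flat 4.99.


def shipping_cost(order_total: float) -> float:
    # Written only after the tests below existed and were failing.
    return 0.0 if order_total >= 100.0 else 4.99


class ShippingCostTest(unittest.TestCase):
    """Each test maps to one line of the Definition of Done."""

    def test_orders_at_threshold_ship_free(self):
        self.assertEqual(shipping_cost(100.0), 0.0)

    def test_smaller_orders_pay_flat_rate(self):
        self.assertEqual(shipping_cost(99.99), 4.99)
```

Saved as, say, `test_shipping.py` (a hypothetical file name), it runs with `python -m unittest test_shipping.py`.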

A note on Git commits.

Well-structured and well-written Git commits keep your code maintainable and approachable, and make it easier to debug. Whether you’re a seasoned veteran or brand new to an open-source community, writing quality Git commits is an essential step towards getting your changes merged. When writing commit messages, use the present imperative tense: for example, “Fix dashboard typo” instead of “Fixed” or “Fixes.”

Excellent commits have two attributes:

- Each commit only does one thing

- Each commit message is its own self-contained story
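The tense rule, and to a lesser degree the one-thing rule, can be partly checked by a script, for example one called from a `commit-msg` hook. The sketch uses rough, hypothetical heuristics: a first word ending in “ed” or a single “s” is flagged as non-imperative, and “and” in the subject hints that the commit does more than one thing. Expect false positives (for example “Embed” or “Focus”), so treat the output as a nudge rather than a gate.

```python
from typing import List


def commit_subject_problems(message: str) -> List[str]:
    """Return rough warnings about a commit message's subject (first) line."""
    lines = message.strip().splitlines()
    if not lines:
        return ["commit message is empty"]
    subject = lines[0].strip()
    first_word = subject.split()[0].lower()
    problems = []
    # Heuristic tense check: "Fixed" or "Fixes" instead of the imperative "Fix".
    if first_word.endswith("ed"):
        problems.append(f"'{first_word}' looks past tense; use the imperative ('Fix')")
    elif first_word.endswith("s") and not first_word.endswith("ss"):
        problems.append(f"'{first_word}' looks third person; use the imperative ('Fix')")
    # Heuristic one-thing check: "and" often joins two unrelated changes.
    if " and " in f" {subject.lower()} ":
        problems.append("subject mentions 'and'; consider splitting into two commits")
    return problems


print(commit_subject_problems("Fix dashboard typo"))  # → []
print(commit_subject_problems("Fixed dashboard typo"))
```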

Pull Request

Once coding is complete and all tests pass, the engineer should open a Pull Request into the main (or dev) branch.

Well-formed Pull Requests should include:

- The purpose of this Pull Request. For example:

This is a spike to explore…

This simplifies the display of…

This fixes handling of…

Use the present imperative tense: for example, “Fix dashboard typo” instead of “Fixed” or “Fixes.”

- Be explicit about what feedback you want, if any: a quick pair of :eyes: on the code, discussion of the technical approach, critique of the design, or a review of the copy.

- Be explicit about when you want feedback. If the Pull Request is a work in progress, say so. A “[WIP]” prefix in the title is a simple, common pattern to indicate that state.

- @mention individuals that you specifically want to involve in the discussion, and mention why. (“/cc @jesseplusplus for clarification on this logic”)

- @mention teams that you want to involve in the discussion, and mention why. (“/cc @github/security, any concerns with this approach?”)

- Include steps to test the change

- Include video, screenshots or any other important elements
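A sketch of assembling a Pull Request title and body that covers the checklist above. The function, its parameters and the Markdown section headings are hypothetical; the result can be pasted into your hosting platform’s Pull Request form or passed to whatever tooling you already use.

```python
from typing import Dict, List, Optional


def build_pull_request(
    title: str,
    purpose: str,
    test_steps: List[str],
    feedback_wanted: str = "",
    mentions: Optional[List[str]] = None,
    wip: bool = False,
) -> Dict[str, str]:
    """Return a Pull Request 'title' and Markdown 'body' covering the checklist."""
    if not purpose.strip():
        raise ValueError("state the purpose of the Pull Request")
    if not test_steps:
        raise ValueError("include steps to test the change")
    lines = ["## Purpose", purpose, "", "## Feedback wanted", feedback_wanted or "None", ""]
    lines.append("## How to test")
    lines += [f"{n}. {step}" for n, step in enumerate(test_steps, start=1)]
    if mentions:
        lines.append("")
        # Each mention should say why, e.g. "@jesseplusplus for clarification on this logic".
        lines += [f"/cc {who}" for who in mentions]
    return {"title": f"[WIP] {title}" if wip else title, "body": "\n".join(lines)}


pr = build_pull_request(
    "Fix dashboard typo",
    "This fixes handling of the dashboard heading.",
    ["Open the dashboard", "Check the heading text"],
    feedback_wanted="A review of the copy",
    mentions=["@jesseplusplus for clarification on this logic"],
    wip=True,
)
print(pr["title"])  # → [WIP] Fix dashboard typo
```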

Automated Testing

Automated tests should run whenever a Pull Request is created or updated. This run executes all the tests written during the In Progress step and can include unit tests, end-to-end (e2e) tests and other DevOps steps that catch bugs and confirm the code meets the requirements of the ticket.

If any automated test fails, the assigned engineer should be notified and the ticket returned to the In Progress phase.
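The gate can be sketched as a function that takes one pipeline run’s results and moves the ticket on or back. `Ticket`, `apply_ci_results` and the notification list (a stand-in for email or chat) are hypothetical; a real setup would wire this into the CI system’s own hooks.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Ticket:
    key: str
    assignee: str
    status: str = "Automated Testing"
    # Stand-in for email or chat notifications.
    notifications: List[str] = field(default_factory=list)


def apply_ci_results(ticket: Ticket, results: Dict[str, bool]) -> Ticket:
    """Advance or return a ticket based on one automated test run.

    `results` maps each check (unit, e2e, other pipeline steps) to True if it passed.
    """
    if not results:
        raise ValueError("no automated test results were reported")
    failed = sorted(name for name, passed in results.items() if not passed)
    if failed:
        ticket.status = "In Progress"
        ticket.notifications.append(
            f"@{ticket.assignee}: {ticket.key} failed {', '.join(failed)}"
        )
    else:
        ticket.status = "Manual Testing"
    return ticket


t = apply_ci_results(Ticket("PROJ-7", "sam"), {"unit": True, "e2e": False})
print(t.status, t.notifications)  # → In Progress ['@sam: PROJ-7 failed e2e']
```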

Manual Testing

Manual testing includes all of the following activities, each completed by someone other than the engineer who wrote the code. If any manual test fails, the assigned engineer should be notified and the ticket returned to the In Progress phase.
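One rule worth enforcing in tooling is that the tester is never the author. A minimal sketch, with a hypothetical `manual_testing_outcome` helper and activity names taken from the sections that follow:

```python
from typing import Dict


def manual_testing_outcome(author: str, tester: str, checks: Dict[str, bool]) -> str:
    """Return the ticket's next status once manual testing is recorded.

    `checks` maps each manual activity to True if it passed.
    """
    if tester == author:
        raise ValueError("manual testing must be done by someone other than the author")
    if not checks:
        raise ValueError("no manual testing activities were recorded")
    return "Product Manager Review" if all(checks.values()) else "In Progress"


print(manual_testing_outcome(
    "sam", "alex",
    {"code review": True, "functional testing": True, "non-functional testing": False},
))  # → In Progress
```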

Code Reviews

Good code reviews look at the change itself and at how it fits into the codebase. They check the clarity of the title, the description and the “why” of the change. They cover the correctness of the code, test coverage and functionality changes, and confirm that the coding guidelines and best practices are followed. They also point out obvious improvements, such as hard-to-understand code, unclear names, commented-out code, untested code or unhandled edge cases. Finally, they note when too many changes are crammed into one review, suggesting that code changes stay single-purpose and that the change be broken into more focused parts.
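A reviewer, or a bot, can flag the “too many changes crammed into one review” case from the diff alone. This sketch counts added and removed lines in `git diff` output (for example `git diff main...HEAD`); the 400-line default threshold is an arbitrary assumption to tune per team, and the parser only understands the per-file `diff --git` layout that Git produces.

```python
from typing import Tuple


def diff_size(unified_diff: str) -> Tuple[int, int]:
    """Count (added, removed) lines in `git diff` output, ignoring file headers."""
    added = removed = 0
    in_hunk = False
    for line in unified_diff.splitlines():
        if line.startswith("diff --git"):
            in_hunk = False  # new file: its ---/+++ headers follow
        elif line.startswith("@@"):
            in_hunk = True   # hunk header: content lines follow
        elif in_hunk and line.startswith("+"):
            added += 1
        elif in_hunk and line.startswith("-"):
            removed += 1
    return added, removed


def too_big_to_review(unified_diff: str, max_changed_lines: int = 400) -> bool:
    """Flag a change that is probably too large for one focused review.

    The 400-line default is an arbitrary assumption; tune it to your team.
    """
    added, removed = diff_size(unified_diff)
    return added + removed > max_changed_lines


sample = (
    "diff --git a/app.py b/app.py\n"
    "--- a/app.py\n"
    "+++ b/app.py\n"
    "@@ -1,2 +1,2 @@\n"
    "-x = 1\n"
    "+x = 2\n"
    " y = 3\n"
)
print(diff_size(sample))  # → (1, 1)
```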

Keep the tone positive!

Good code reviews ask open-ended questions rather than making strong or opinionated statements. They offer alternatives and possible workarounds that might work better for the situation. These reviews assume the reviewer might be missing something and, at first, ask for clarification instead of correction.

Better code reviews are also empathetic. They know that the person writing the code spent a lot of time and effort on this change. These code reviews are kind and unassuming. They applaud nice solutions and are all-round positive.

Functional testing

- Unit testing

- Functions

- Accessibility

- Smoke testing

- Integration testing

Many functional tests will be designed around given requirement specifications – meeting business requirements is a vital step in designing any application. For instance, a requirement for an e-commerce website is the ability to buy goods.

A practical example of this is: when a customer checks out of a shopping basket, they should be sent to a secure payment page, then to bank security verification, and then they should receive a confirmation email. Functional testing verifies that each of these steps works.
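The checkout example translates into functional tests that assert each step happens in order. Here the shop is a hypothetical in-memory fake (`FakeCheckout`) so the sketch runs on its own; a real suite would drive the actual application, for instance through a browser automation tool. The tests are plain functions in the style pytest collects.

```python
from typing import List


class FakeCheckout:
    """Hypothetical in-memory shop that records each checkout step it performs."""

    def __init__(self, bank_approves: bool = True):
        self.bank_approves = bank_approves
        self.steps: List[str] = []
        self.emails_sent: List[str] = []

    def check_out(self, customer_email: str, basket: List[str]) -> bool:
        if not basket:
            return False
        self.steps.append("secure payment page")
        self.steps.append("bank security verification")
        if not self.bank_approves:
            return False
        self.steps.append("confirmation email")
        self.emails_sent.append(customer_email)
        return True


def test_checkout_goes_through_every_step():
    shop = FakeCheckout()
    assert shop.check_out("ana@example.com", ["book"])
    assert shop.steps == [
        "secure payment page",
        "bank security verification",
        "confirmation email",
    ]
    assert shop.emails_sent == ["ana@example.com"]


def test_declined_payment_sends_no_confirmation():
    shop = FakeCheckout(bank_approves=False)
    assert not shop.check_out("ana@example.com", ["book"])
    assert shop.emails_sent == []
```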

Non-functional testing

- Performance testing

- Load testing

- Reliability

- The readiness of a system

- Usability testing

A practical example would be: checking how many people can simultaneously check out of a shopping basket.
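The simultaneous-checkout question can be sketched as a load test: start many customers at once and count how many checkouts succeed. `FakeCheckoutService` is hypothetical, with a fixed number of slots and a simulated 0.1 s processing time; a real load test would target a staging environment with a dedicated tool. Because timing varies between runs, the loop prints results rather than promising exact numbers.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class FakeCheckoutService:
    """Hypothetical service that can process only `max_concurrent` checkouts at once."""

    def __init__(self, max_concurrent: int):
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def check_out(self) -> bool:
        # Reject immediately instead of queueing when every slot is busy.
        if not self._slots.acquire(blocking=False):
            return False
        try:
            time.sleep(0.1)  # simulated payment processing
            return True
        finally:
            self._slots.release()


def simultaneous_checkouts(service: FakeCheckoutService, customers: int) -> int:
    """Start `customers` checkouts at the same moment; return how many succeeded."""
    start_line = threading.Barrier(customers)

    def one_customer(_: int) -> bool:
        start_line.wait()  # hold everyone until all threads are ready
        return service.check_out()

    with ThreadPoolExecutor(max_workers=customers) as pool:
        return sum(pool.map(one_customer, range(customers)))


for customers in (5, 10, 20):
    ok = simultaneous_checkouts(FakeCheckoutService(max_concurrent=8), customers)
    print(f"{customers} customers: {ok} checkouts succeeded")
```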

Not every type of software test falls neatly into these two categories, however. Regression testing, for instance, could be considered either, depending on how the tests are run.

Product Manager Review

The Review (also called a demo or demonstration) is a fundamental Scrum ceremony that gives the product owner, stakeholders and anyone else invited visibility of the results. For an agile team, the review is a key event, because the commitment the team agreed on must be demonstrated as real, working functionality. This is the opportunity for final approval from the primary stakeholders.

Approved

Issues in the Approved state have satisfied all the requirements set out in the Definition of Done and have been approved by the Product Manager. While these issues are technically complete, they have not yet moved into production and are therefore not yet closed as Deployed. These issues are typically assigned to the DevOps or deployment teams to complete.

Deployed

Issues in the Deployed state have moved into production and are closed as completed.
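The whole flow can be captured as a table of allowed transitions, which some issue trackers let you configure directly. In this sketch the statuses come from this post, but a few moves are assumptions and are marked as such: sending items back from Backlog to Cold Backlog or from Sprint Selected to Backlog, and returning a ticket to In Progress if the Product Manager Review does not approve it.

```python
from typing import Dict, List, Set

# Allowed status changes. Transitions marked "assumption" are not stated in the post.
TRANSITIONS: Dict[str, Set[str]] = {
    "Cold Backlog": {"Backlog"},
    "Backlog": {"Sprint Selected", "Cold Backlog"},         # back to Cold Backlog: assumption
    "Sprint Selected": {"In Progress", "Backlog"},           # back to Backlog: assumption
    "In Progress": {"Pull Request"},
    "Pull Request": {"Automated Testing"},
    "Automated Testing": {"Manual Testing", "In Progress"},
    "Manual Testing": {"Product Manager Review", "In Progress"},
    "Product Manager Review": {"Approved", "In Progress"},  # rejection path: assumption
    "Approved": {"Deployed"},
    "Deployed": set(),  # closed as completed
}


def move(current: str, target: str) -> str:
    """Return `target` if the workflow allows the move, otherwise raise ValueError."""
    if target not in TRANSITIONS.get(current, set()):
        raise ValueError(f"cannot move a ticket from {current!r} to {target!r}")
    return target


def replay(path: List[str]) -> str:
    """Apply a sequence of statuses in order and return the final one."""
    status = path[0]
    for target in path[1:]:
        status = move(status, target)
    return status


happy_path = [
    "Cold Backlog", "Backlog", "Sprint Selected", "In Progress", "Pull Request",
    "Automated Testing", "Manual Testing", "Product Manager Review", "Approved", "Deployed",
]
print(replay(happy_path))  # → Deployed
```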

Discover more from AJB Blog
